Humanity Protocol

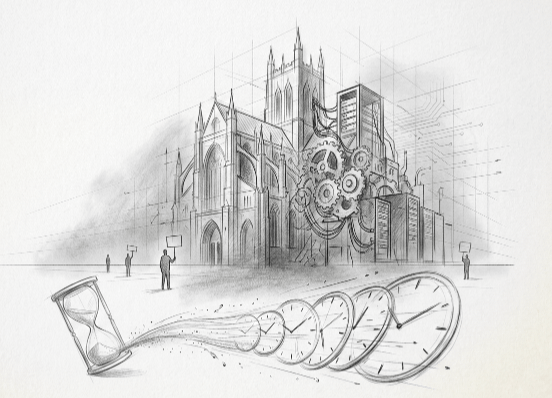

Institutions In The Time Of AI

Part 1

Forcing Functions at Clock Speed

The most consequential institution of the 21st century may not have a constitution, a legislature, or a name. It may not even recognize itself as an institution yet. The last time this happened, the last time a new institutional form consolidated economic and political power before democratic governance developed the tools to constrain it, the interregnum lasted a century. This time, the cycle is running faster. Everything is running faster. That’s a problem of institutional magnitude.

I. The Machine

Let’s treat consciousness the way an engineer would: not as a mystery, but as a machine. Machines take inputs. They produce outputs. They run on an operating system.

The inputs are data: measurements, observations, signals from the physical world. The operating system is science: the method by which raw data becomes structured knowledge, falsifiable models, usable insight. And the output is what engineers call a forcing function, a constraint that compels action, that converts knowledge into work.

Data creates forcing functions. Forcing functions create work. Work creates progress. Progress creates prosperity. Prosperity enables continuity. Every hospital, every harvest, every bridge and microchip exists because data moved through this chain and became work. Human continuity, the survival and flourishing of the species, is the downstream product of this machine running successfully.

The question is simple: what determines how fast and how well the machine runs?

II. The Accelerant

The bottleneck was always cycle time: the interval between measurement and insight, between observation and forcing function.

Artificial intelligence compresses this cycle. Not by producing fundamentally new data. The genuinely novel measurements still come from physical instruments, from telescopes and gene sequencers and particle colliders. What AI does is collapse the interval between measurement and insight. It raises the clock speed of the consciousness machine.

Consider AlphaFold. Protein folding, the problem of predicting a protein’s three-dimensional structure from its amino acid sequence, consumed decades of wet-lab work and computational brute force. DeepMind’s AlphaFold solved it in a way that made the prior cycle time look geological. The forcing functions that emerge from structural biology (drug targets, enzyme design, disease mechanisms) now arrive orders of magnitude faster than the institutions downstream of them can absorb.

AI doesn’t change what the machine does. It changes how fast the machine cycles, and ultimately how well. Humanity is by definition a feedback loop. Its name is culture. Done poorly, faster cycles entrench local maxima: the machine converges on a suboptimal answer more efficiently, and the cost of descending from that peak, culturally, politically, economically, is enormous. Done well, faster cycles explore more of the solution space before locking in. The quality of the cycling matters as much as the speed.
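The difference between entrenching a local maximum and exploring before locking in can be made concrete with a toy search. What follows is a minimal sketch in Python, not a model of any real institution: the `LANDSCAPE` values and the function names `hill_climb` and `explore_then_commit` are invented purely for illustration.

```python
# Hypothetical one-dimensional "solution space": each index is a candidate
# answer, each value is how good that answer is. Index 2 is a local peak
# (value 5); index 8 is the global peak (value 9).
LANDSCAPE = [1, 3, 5, 4, 2, 1, 2, 6, 9, 7, 3]


def hill_climb(start: int) -> int:
    """Greedy ascent: step to the better neighbour until no neighbour is
    strictly better. Returns the index of the peak that was reached."""
    pos = start
    while True:
        neighbours = [i for i in (pos - 1, pos + 1) if 0 <= i < len(LANDSCAPE)]
        best = max(neighbours, key=lambda i: LANDSCAPE[i])
        if LANDSCAPE[best] <= LANDSCAPE[pos]:
            return pos  # no uphill move left: locked in
        pos = best


def explore_then_commit(starts) -> int:
    """Run the same greedy ascent from several starting points and only
    then commit to the best peak found."""
    peaks = [hill_climb(s) for s in starts]
    return max(peaks, key=lambda i: LANDSCAPE[i])


if __name__ == "__main__":
    fast_only = hill_climb(0)
    explored = explore_then_commit([0, 5, 10])
    print("single fast climb locks in at index", fast_only)
    print("explore-then-commit settles at index", explored)
```

In this sketch the single climb is perfectly efficient and still stops on the lower peak. Running it faster would only reach that peak sooner. The second strategy spends extra cycles sampling other starting points before committing, which is the sense in which the quality of the cycling matters as much as the speed.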

Cycle speed changes everything, because the institutions built to process forcing functions at human speed are now receiving them at compute speed.

But the machine needs fuel.

III. The Bottleneck

AI runs on electrons. Every inference, every training run, every parameter update is a physical event: electrons moving through silicon. The entire chain, from data to forcing functions to work to progress to continuity, is gated by the availability of electrons allocated to computation.

Today, that allocation is made by a small number of entities. The cloud hyperscalers (Microsoft, Google, and Amazon) each commit upward of fifty billion dollars annually to datacenter infrastructure. The AI labs building on and increasingly alongside them (Meta, xAI, OpenAI, Anthropic) are making their own electron allocation decisions at comparable scale, in some cases bypassing the cloud layer entirely to build dedicated compute. These are not technology investments in any conventional sense. They are electron allocation decisions: civilizational bets about which forcing functions get produced and which don’t, made by corporate boards and startup executives accountable to shareholders and investors, not to the species.

The competition for electrons is already physical. Microsoft signed a twenty-year power purchase agreement to restart a reactor at the Three Mile Island nuclear plant. xAI bypassed the grid entirely, deploying over 500 megawatts of leased gas turbines at its Memphis cluster to bring 100,000 GPUs online in four months. OpenAI and Oracle ordered a 2.3 gigawatt onsite gas plant in Texas. Meta is building behind-the-meter gas generation in Ohio. Hyperscalers and AI labs alike negotiate directly with utilities, competing with municipalities for grid capacity. Nvidia decides which compute architectures get fabricated by TSMC, the single-point-of-failure foundry on which the entire AI supply chain depends. Saudi and Emirati sovereign wealth funds are pouring billions into compute infrastructure, a signal that nation-states are recognizing the electron thesis implicitly, allocating sovereign capital accordingly, even without a framework to articulate why.

Each of these decisions is an electron allocation choice that ripples forward through the entire continuity chain.

Electron allocation is capital allocation. The unit of account has changed from dollars to electrons, and the return metric has changed from profit to continuity, but the structural problem is identical. And like every capital allocation problem, the question is governance. Who decides where the electrons go? And what are the properties of the thing being allocated? Electrons are scarce, fungible, measurable, and universally demanded. These are the properties of a currency, not just a commodity.

IV. Groundhog Day

The interregnum has happened before. Twice.

Coal. The eighteenth and nineteenth centuries presented the first version of this problem. A new energy source enabled forcing functions at unprecedented speed and scale. The steam engine, the factory, the railroad. The institution that emerged to govern coal allocation was the corporation: a legal entity optimized for marshaling capital and deploying energy at industrial scale. It displaced the monarchy and the church as the dominant institutional form in barely a century.

Democratic governance lagged. Labor laws, antitrust regulation, workplace safety standards all arrived after the institutional transition was already complete. The corporation won first. Governance caught up retroactively, decades later, and only partially.

Bretton Woods. As World War II drew to a close, a new resource required civilizational-scale governance: dollar-denominated capital flows. The volume of cross-border capital had outgrown the institutional architecture designed for a simpler era. A small group of actors, notably Keynes and White, recognized the interregnum for what it was and designed a new architecture deliberately at the 1944 conference: the IMF, the World Bank, fixed exchange rates pegged to the dollar.

It worked. For a while. Then the underlying conditions shifted faster than the institution could adapt, and Nixon suspended the dollar’s convertibility into gold in 1971. But the question the framework forces us to ask is harder than whether Bretton Woods “worked.” Did it endow humanity with trade currencies that reduced political conflict, or did it entrench allocation patterns that opened entrypoints for despots, distorted geographic production, and created the conditions for its own collapse? The answer, uncomfortably, is both. Deliberate institutional design bought stability. It also locked in a local maximum that proved enormously expensive to descend from.

The critical detail: Bretton Woods was not merely about institutional governance. It was about who controlled the reserve currency and therefore set the terms of global trade. Dollar dominance was not incidental to American hegemony in the latter twentieth century. It was American hegemony.

Now: electrons. Same pattern, third time. A new scarce resource requires civilizational-scale allocation. No institutional architecture exists to govern it. An interregnum is underway.

Each cycle runs faster than the last. Coal’s interregnum lasted a century. Bretton Woods was designed under wartime urgency in a matter of years. The electron interregnum is measured in quarters. The machine’s clock speed applies to the pattern itself.

V. The Meta-Variable

One person appears to have independently derived something very close to the electron thesis and has been executing against it, in public, for two decades.

Elon Musk talks about clock speed constantly. He hires for it. He fires for the absence of it. He runs parallel companies on compressed iteration cycles that look, from the outside, like mania. The popular narrative frames this as personality: relentlessness, workaholism, an inability to slow down. The framework this essay describes suggests something different. He identified the meta-variable.

Clock speed is the meta-variable. Not capital, not talent, not market timing. The entity that cycles fastest converges first. The entity that converges first on the electron-to-data-to-prior stack sets the terms for everyone else. Everything Musk has built follows from this single insight.

In a recent three-hour interview with Dwarkesh Patel and John Collison, Musk repeats one phrase so often he jokes about it himself: “the limiting factor.” What is the limiting factor on speed? Tackle that. What is the next limiting factor? Tackle that. “I have a maniacal sense of urgency,” he says. “So that maniacal sense of urgency projects through the rest of the company.” This is not a management philosophy. It is the meta-variable expressed as an operating principle.

He describes the bottleneck cascade explicitly: energy is the constraint now. Chip output will exceed the ability to turn chips on by the end of this year. In three to four years, chips become the constraint. Beyond that, the only way to scale is space. Each limiting factor, once identified, becomes the next forcing function. The sequence is not opportunistic. It is architecturally determined.

Tesla: electron generation and storage. SpaceX: the deployment infrastructure for moving computation off-planet. Starlink: global communications substrate. xAI: electron-to-data conversion at scale. X: prior harvesting, the mechanism for capturing what humanity values, fears, and wants in real time. And at the end of the cascade, the TeraFab: chip manufacturing at a million wafers per month, because the existing foundries, TSMC and Samsung, are going “balls to the wall” and it is still not fast enough.

It is not a portfolio of companies. It is a vertically integrated clock speed stack.

This is not a criticism. It is an acknowledgment that one person saw the structural logic of the next century before the rest of the industry had finished optimizing the current quarter. While the technology industry competes for products, platforms, and earnings, Musk has been solving for the variable that determines all of them. He has said so, repeatedly, in plain language. The vocabulary this essay offers may simply be catching up to what he has been describing all along.

VI. The Elon Stack

With the meta-variable in view, what looks like corporate ambition reveals itself as something structurally different.

The popular narrative fixates on Mars. Mars is cinematic. But run it through the framework: Mars is a backup instance of the consciousness machine. It is redundancy, not the primary value creation. Musk himself reframes it in the interview. When asked about SpaceX’s mission to preserve consciousness on Mars, he pivots. “The important thing is consciousness,” he says. The future will have vastly more silicon intelligence than biological. The goal is to “take the set of actions that maximize the probable light cone of consciousness and intelligence.” Mars is in that set. But it is not the set.

The Moon is more revealing. Musk describes a mass driver on the lunar surface, launching AI satellites manufactured from lunar silicon and aluminum into deep space. Earth-based launches can reach roughly a terawatt per year of orbital compute. The Moon, he estimates, unlocks a petawatt. The jump from terawatt to petawatt is not incremental. It is a thousand-fold expansion of the consciousness machine’s capacity, and he is already engineering the path to it. “I just want to see that thing in operation,” he says, and the enthusiasm is unmistakable. But what sounds like childlike wonder is a mind that has already internalized the limiting factors three rungs ahead, including the ones that haven’t revealed themselves yet. Earth is the first rung of the scaling ladder. Moon is the second. Deep space is the third. Each rung is a clock speed unlock gated by new sets of limiting factors, and Musk’s particular genius is that his planning accounts not only for the constraints he can see but for the categories of constraint he cannot. The architecture is designed to absorb surprises, not just solve known problems.

The framework suggests the greater contribution may be terrestrial, and it may be the one least credited to him. Not the destinations, but the clock speed required to reach them, now embedded in the culture as a baseline expectation that every other institution must meet or be left behind. Before Musk, the auto industry iterated on a seven-year product cycle and considered it aggressive. Before SpaceX, launch providers measured progress in decades. Before xAI, building a competitive large language model was assumed to require years of institutional momentum. He compressed each of these timelines so dramatically that the prior pace became untenable for everyone in the industry, not just his direct competitors. This is the Red Queen effect applied to institutional metabolism. The clock speed Musk normalized doesn’t just move him forward. It resets the baseline. Every other actor must now run at his pace merely to remain in place. Boeing, the legacy auto industry, OpenAI, Google, none of them are competing against Musk specifically. They are competing against the standard he set for what is possible. The cultural meme is the forcing function. He is using culture, in exactly the way this essay defined it, as an electron allocation governance mechanism: shaping what the species prioritizes by demonstrating what is achievable when someone refuses to accept the prevailing clock speed as given.

Now consider what X accumulates. Every conversation, every engagement signal, every Community Note is a prior: a data point about what humanity thinks matters, fears, celebrates, and argues over. At sufficient scale, this is not a social media platform. It is the largest real-time survey of human values ever constructed, running continuously, updated by billions of voluntary inputs. The raw signal is noisy: genuine human expression layered with bot-generated campaigns, commercial astroturfing, and state-sponsored narrative shaping. The noise is itself signal. Who is trying to shape the priors, in which direction, and how much they’re willing to spend to do it, is as informative as the organic sentiment underneath. The AI layer sitting on this firehose does not need a clean feed. It needs a comprehensive one. And X does not merely read the priors. It shapes them. The platform surfaces, suppresses, frames, and contextualizes, and in doing so it alters the very signals it collects. This is not manipulation. It is what every medium does. The printing press reshaped the priors of every culture it touched. Television reshaped them again. X does it at compute speed, with feedback loops tight enough to read the response and adjust in real time. If the electron allocation problem requires distributed priors to determine which futures are worth exploring, then the entity that can both read and reshape those priors does not merely hold the compass. It calibrates the compass. The electrons are the fuel. The priors are the direction. The ability to do both simultaneously, to read humanity’s values and influence what humanity comes to value, is the ability to point the consciousness machine.

The Twitter acquisition itself, widely read as impulsive, resolves cleanly through the framework. A prior-harvesting layer. A cultural wedge into domains where a rocket and car engineer has no natural standing. A payments chassis. A distribution mechanism for xAI. Real-time signal on what humanity values. Musk perceived value across all five dimensions simultaneously, at a price that looked irrational only if we evaluated each dimension in isolation. In single-domain analysis, he overpaid for a social media company. In multi-domain analysis, he acquired the missing layer of the stack at a discount to its combined strategic value. This is the structural advantage of the multi-domain state machine: when only one buyer can perceive the combined value of an asset across dimensions no other bidder is evaluating, the seller prices what they have, and the buyer prices what it becomes. The clearing price reflects the buyer’s vision, not the seller’s inventory. Forty-four billion dollars for a social media company was absurd. Forty-four billion dollars for the compass of the consciousness machine was a bargain.

Then consider the orbital compute proposal: capture solar energy in space, where panels are five to ten times more effective than on the ground and batteries are unnecessary, and process the electrons into data at the source. Transmit the output. It is the logic of edge computing at solar scale. Musk predicts that within five years, SpaceX will launch and operate more AI compute per year than the cumulative total on Earth. When asked if SpaceX becomes a hyperscaler, he corrects the framing: “Hyper-hyper.”

The SpaceX IPO, targeted for the second half of 2026 at a valuation approaching $1.5 trillion, is the financing event for the stack. The dual-class share structure is worth understanding precisely. Class A retains allocation authority: where the electrons go, which limiting factors get solved next, how fast the clock runs. Class B gets economic exposure to the outcome of those decisions. This is not a conventional equity offering at an unconventional price. It is something the capital markets have not seen before: a mechanism for channeling public savings into the infrastructure of the electron economy, at sovereign-debt scale, governed by a single consciousness that has been correctly identifying the bottleneck cascade for two decades. The investor buying Class B is not buying a stake in a rocket company that also does satellites. She is buying a position in a vertically integrated clock speed stack whose scope, from electron generation through orbital compute to prior harvesting, has no precedent in the history of public markets. There is no comparable. There is no sector. There is no analyst framework built for what this actually is. This essay describes a structural framework, not an investment thesis, and nothing here should be read as a recommendation to buy or sell any security. The $1.5 trillion figure Musk mentions suggests the market has already priced in the meta-variable, though it does not yet have the vocabulary to explain why. YMMV.

Trace the chain: electron capture, compute conversion, prior harvesting, institutional chassis. An entity that controls this full stack does not merely participate in the electron economy. It defines the terms of participation for everyone else.

This is physics, not ambition. When no governance architecture exists for a new effective reserve currency, a vacuum forms. Vacuums are unstable states. They do not wait for conferences or committees. They collapse toward the nearest mass moving at sufficient speed. The Bretton Woods architects understood this: they designed a governance architecture deliberately, under pressure, before the vacuum resolved itself by default. The architecture was imperfect. It locked in a local maximum. But it was a deliberate fill. Someone chose the shape.

The electron economy has no equivalent moment. No one convened a conference. No one designed the terms. What appears to be emerging is a reserve currency forming from the bottom up, through the accumulated infrastructure decisions of actors who are each solving for their own limiting factor at maximum clock speed. If the vacuum resolves around a single stack, it will not be because anyone seized the position. It will be because the vacuum collapsed, as vacuums do, toward the fastest moving mass in the vicinity. No one blames gravity.

VII. The Lever

Archimedes understood that the right tool, positioned correctly, transforms the merely possible into the inevitable. Jensen Huang built that tool. He contributed to humanity the very lever Elon Musk is using to reach the Moon.

In the early 2000s, GPUs existed to render video game graphics. Jensen saw something else in them. He invested Nvidia’s resources into building CUDA: a general-purpose parallel computing platform that would allow developers to use GPUs for any massively parallel workload, not just pixels on a screen. The idea emerged from Stanford, where a PhD student named Ian Buck had been experimenting with using graphics hardware for scientific computation. Jensen hired Buck in 2004 and paired him with Nvidia’s director of GPU architecture. Together, they began building what would become the lever.

For years, the investment looked like an indulgence. The market for general-purpose GPU computing barely existed. Jensen was building an ecosystem for a demand curve that had not yet materialized. CUDA launched in 2007, and for the better part of a decade, its primary constituency was a small community of scientists and researchers who needed parallel computation at scale. The market didn’t understand. Jensen kept investing.

CUDA is, in essence, the language the electron economy speaks. It is the software layer that translates between raw GPU hardware and the AI workloads that run on it: training, inference, simulation, optimization. When deep learning exploded in the 2010s, the ecosystem was already in place. The major machine learning frameworks, including TensorFlow and PyTorch, the foundational tools of the AI revolution, were built to run on CUDA. Not because Nvidia forced adoption. Because CUDA was there, it worked, and a decade of developer tooling, documentation, libraries, and community had accumulated around it.

This is a flywheel, and understanding its rotation is essential to understanding Jensen’s contribution. Developers build on CUDA because the tools are mature. Hardware manufacturers optimize for CUDA because the developers are there. AI labs choose Nvidia GPUs because the software ecosystem reduces time to production. Each revolution of the flywheel deepens the advantage. Jensen did not extract value from the ecosystem. He defined its geometry. The shape of the AI buildout, the way capital flows through it, the speed at which it moves, these are consequences of a platform he willed into existence a decade before the demand materialized.

Every competitor (AMD, Intel, Google’s TPUs, Amazon’s Trainium) benchmarks against Nvidia’s architecture. They denominate in Jensen’s units whether they choose to or not. This is not market power through restriction. It is market power through ecosystem gravity. Once a standard reaches sufficient adoption, the switching costs exceed the benefits of alternatives for all but the most motivated actors.

Jensen’s prescience warrants something closer to gratitude than recognition. He saw, long before the rest of the industry, that the limiting factor for computing’s future would not be raw hardware performance but the software ecosystem that makes hardware usable at scale. He invested in that insight for a decade before the market validated it. By the time the AI buildout began in earnest, the flywheel was already spinning.

And then he kept going. The $6.9 billion acquisition of Mellanox, announced in 2019 and completed in 2020, gave Nvidia the high-speed networking layer, InfiniBand, that links GPUs together in clusters. Without it, the 100,000-GPU training runs that power today’s frontier models would be impossible. Mellanox turned Nvidia from a chip company into a datacenter company. Then Run:ai, acquired in 2024, added the orchestration layer: software that dynamically allocates GPU resources across workloads, squeezing more useful compute from the same physical hardware. Jensen was not content to build the lever. He built the fulcrum and the ground it stands on. Each acquisition deepened the ecosystem’s gravity and raised the cost of leaving it.

When Physics Changes Everything

Jensen’s achievement is immense. It also rests on a foundation he does not control, and understanding why illuminates something important about the ecosystem these actors share.

Nvidia built the ecosystem. TSMC fabricates the chips. ASML builds the lithography machines that make fabrication possible. Samsung provides the alternative foundry. The lever is powerful. The fulcrum stands on someone else’s ground.

This is the structural difference between ecosystem power and physics power, and it matters for understanding what kind of economy is actually emerging. Ecosystem power is built on software: platforms, developer tools, network effects, accumulated infrastructure. It is real, durable, and valuable. It is also, on a long enough timeline, contingent. New developer communities can form. Alternative frameworks can mature. Switching costs, however high, are finite. The CUDA ecosystem is the most formidable software moat in the history of computing. It is still a software moat.

Physics power is different. A rocket that can reach orbit is not displaced by a better software library. A fab that manufactures chips at scale is not routed around by a developer community. An orbital compute network is not forked on GitHub. When Musk describes building the TeraFab, he is describing a world in which the ground underneath the fulcrum shifts. If custom silicon proliferates, if Google’s TPUs build sufficient developer momentum, if Tesla’s AI chips reach scale, the ecosystem’s center of gravity moves. The lever still works. It works from a different position.

And this is the point: the lever and the stack need each other. Jensen built the platform that made the AI buildout possible at the speed it moves. Musk built the infrastructure that gives the platform a civilizational-scale market. The CUDA ecosystem made xAI’s 100,000-GPU cluster operational in months rather than years. The electron allocation decisions Musk makes at Memphis and in orbit flow through Jensen’s architecture. They are not competing. They are co-dependent nodes in a flywheel that neither could spin alone. The ecosystem is the thing. Jensen defined its geometry. Musk is scaling its ambition. The distinction between their forms of power is not a hierarchy. It is a division of labor inside a single machine.

Jensen sees this with characteristic clarity. Nvidia’s pace of architecture releases, its aggressive expansion into networking, systems, and full-stack datacenter design, is an attempt to embed the ecosystem so deeply in the physical infrastructure of AI that displacing it would require rebuilding the entire stack, not just porting to a different software layer. He is working to make the lever load-bearing: to ensure that the structure cannot stand without it.

The evidence suggests he has largely succeeded, and the electron economy is immeasurably better for it. Archimedes asked for a lever and a place to stand. Jensen built both.

VIII. The Biologist

If Jensen is Archimedes, the builder of the lever, George Church is Prometheus. He doesn’t hoard the fire. He gives it away, and the world rearranges itself around the gift.

Church has been living at compute speed inside a discipline that moves at wet-lab cadence for four decades. In 1984, he developed the first direct genomic sequencing method, the technique that would eventually become the foundation for reading DNA cheaply at scale. He helped initiate the Human Genome Project the same year. When the project completed in 2003 after thirteen years and three billion dollars, Church was already working on making the whole enterprise trivially inexpensive. Today a full human genome sequence costs less than a decent dinner. That cost curve, from billions to hundreds, is Church’s handiwork more than any other single individual’s.

What makes Church structurally different from Jensen or Musk is not the scale of his ambition but its distribution. Jensen built one platform. Church built a substrate and then let it proliferate. His lab at Harvard has co-founded roughly fifty companies, sixteen in a single year. Not through a venture strategy. Through the simple physics of a lab that produces more forcing functions than any single company can absorb. GC Therapeutics spins out to pursue stem cell medicines. Colossal Biosciences spins out to pursue de-extinction. Nebula Genomics spins out to make sequencing consumer-accessible. eGenesis spins out to engineer pig organs for human transplantation. Each company is a forcing function that found a channel. The lab is the river.

The pattern has a name in this essay’s framework. Jensen defined the geometry of the compute ecosystem. Church defined the geometry of the biology ecosystem. But where Jensen’s geometry is a flywheel, pulling developers and hardware manufacturers and AI labs into a self-reinforcing orbit, Church’s geometry is a river delta: a single flow of insight branching into dozens of channels, each depositing capability in a different domain, none of them controlled from the center.

This is not a management philosophy. It is a temperament. Church has dyslexia, narcolepsy, OCD, and ADD. He had to repeat ninth grade. He was withdrawn from Duke’s graduate program for spending too much time in the lab and not enough in class. He later survived a heart attack. He calls narcolepsy “a feature, not a bug” because almost all of his breakthrough ideas arrived while he was dreaming or half-asleep: next-generation sequencing, genome writing, CRISPR innovations. His lab’s culture runs on a principle he describes without irony: “The only thing you need to do to join George’s lab is show up.” He does not lead by command. He arranges environments where it is okay to fail, and then he waits for the interesting failures to reveal where the next channel should flow.

In 2005, Church launched the Personal Genome Project and made himself guinea pig number one. His genome, his medical records, his brain scans, images of his colon, all published online, openly accessible, freely usable by any researcher on earth. In a field where data hoarding is the default strategy and privacy is the justification for institutional gatekeeping, Church built the opposite: an open-access commons where the most personal data imaginable is shared for the acceleration of science. This is the Promethean instinct in its purest form. The fire is more valuable distributed than held.

Now consider what happened when compute speed arrived in biology.

Demis Hassabis had been thinking about intelligence the way Jensen thinks about ecosystems: as an engineering problem with a long fuse. He co-founded DeepMind in 2010 on a bet that most of the field considered premature: that general-purpose learning algorithms, trained on specific domains, would reveal structure no human specialist could find. For years, the bet looked academic. Then AlphaGo defeated Lee Sedol in 2016, and the world noticed the parlor trick. What it missed was the method. AlphaGo did not play Go better than humans by studying human games more carefully. It played moves no human had ever conceived in three thousand years of play. It saw the territory differently.

AlphaFold was that same method pointed at biology. Protein folding, the problem introduced in Section II of this essay, had consumed decades of wet-lab work and computational brute force. AlphaFold did not fold proteins faster. It saw the shapes that were already implicit in the amino acid sequences, the way a sculptor claims to see the figure already present in the marble. Two hundred million protein structures, predicted and published, openly, for anyone to use. The entire downstream landscape of drug discovery, enzyme design, disease mechanisms, illuminated in months. Hassabis does not hoard the Oracle’s pronouncements. He publishes them. The AlphaFold database is open access, the largest gift to structural biology in the field’s history.

If Jensen is Archimedes and Church is Prometheus, Hassabis is the Oracle: the one who makes the invisible visible. But this Oracle does not sit in a temple waiting to be consulted. He builds the temple, trains the priesthood, and then opens the doors. AlphaFold begat AlphaFold 3, which predicts interactions between proteins and DNA, RNA, and small molecules. AlphaProteo designs novel proteins that bind to target molecules. AlphaEvolve optimizes everything from datacenter efficiency to the mathematical conjectures that underpin computer science itself. Each is a new chamber in the temple. Each reveals structure that was always there, waiting for an instrument precise enough to resolve it. The Oracle’s contribution is not a single prophecy. It is a method of seeing that compounds.

And here is where the Oracle meets Prometheus on the ground. Hassabis revealed the landscape. Church is building the infrastructure to inhabit it. In 2025, Church assumed the role of Chief Scientist at Lila Sciences, a company that had spent two quiet years inside Flagship Pioneering before emerging with $550 million in funding and a valuation exceeding $1.3 billion. Nvidia’s venture arm invested. The goal is what Lila calls “Scientific Superintelligence,” though Church, characteristically, draws a careful line: not general superintelligence, but AI applied to the scientific method itself, with the scope kept deliberately narrow. The ratio of benefit to risk, he argues, is far better when the intelligence is pointed at science rather than left to wander.

What Lila is building are AI Science Factories: autonomous laboratories where AI models generate hypotheses, design experiments, dispatch robotic systems to execute them, assimilate the results, and begin again. Thousands of experiments running simultaneously, each iteration feeding data back into models that improve with every cycle. AlphaFold sees the shape. Lila tests what the shape can do. The Oracle reads the map. The Factories build the roads. In the language of Section I: the consciousness machine, made physical, running the scientific method at compute speed.

This is what Church has been building toward for forty years. The sequencing breakthroughs made biological data cheap. The genome engineering tools made biological intervention precise. The open-access philosophy made the data shareable. Hassabis proved that AI could see what humans could not. And now Lila closes the loop: hypothesis to experiment to result to new hypothesis, cycling at a cadence that no human-paced institution can match. Church built the soil. Hassabis proved what could grow in it. Lila is the greenhouse running at compute speed.

The forcing functions arriving from this convergence are already outrunning the institutions meant to absorb them. The FDA’s drug approval process was designed for a world where discovering a candidate compound took years. AlphaFold identifies targets in hours. Lila validates novel protein therapeutics on a timeline the regulatory apparatus was never built to process. Clinical credentialing was designed for a world where medical knowledge updated in predictable increments. These tools update it continuously. The regulatory apparatus, the training pipeline, the reimbursement architecture, all of it clocked to a cadence that computational biology has already left behind.

Church and Hassabis together demonstrate something the electron sections of this essay might obscure: the clock speed mismatch is not confined to silicon. It is arriving in carbon. The same structural failure that leaves financial institutions unable to price the electron economy is leaving medical institutions unable to absorb the forcing functions that computational biology is now producing at scale. Different domain. Same interregnum. Same vacuum forming where the institutional architecture cannot keep pace.

Prometheus gave humanity fire and accepted that humans would decide what to burn. The Oracle gave humanity sight and accepted that humans would decide where to look. Church sequences genomes, engineers organisms, publishes everything. Hassabis trains models, solves structures, opens the database. Between them, the fire is distributed and the territory is mapped. What matters now is whether the institutions downstream can metabolize what they have illuminated.

IX. The Vacuum

The natural response is: regulate.

But regulate how, and at what speed? The European Union’s AI Act is the most ambitious attempt by a legacy institution to govern AI at legislative speed. By the time it took effect, the conditions it was designed to address had already evolved past its framework. GDPR, an earlier-generation attempt, was designed for data governance, not electron governance. Compute export controls are electron tariffs, though no one in the policy apparatus uses that language. These are the early, fumbling attempts of institutions that can feel the vacuum forming but cannot move fast enough to fill it deliberately.

These are not failures of competence. They are failures of clock speed. Legacy institutions never needed speed sensors because the forcing functions arrived at walking pace. One needn’t install a speedometer on a horse. The horse is the speed limit. When the forcing functions accelerated past institutional clock speed, there was no instrumentation to detect the mismatch. The institutions are not slow. They are clocked wrong, and unequipped to measure how far behind they are.

And when a forcing function does arrive that contradicts an institution’s existing framework, the institution does not smoothly update. It oscillates. It resists, convenes a committee, commissions a study, debates internally, overcorrects, and eventually settles into a new equilibrium that may or may not resemble the original signal. This is the settling time of institutional cognition, and at human clock speed it was manageable because the next forcing function did not arrive until the oscillation from the previous one had damped out. At compute clock speed, the next forcing function arrives while the institution is still ringing from the last one. The oscillations compound. The institution never settles. It is perpetually destabilized, processing the shockwave from forcing function N while forcing function N+1 hits.
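
The settling-time argument can be made concrete with a toy model. What follows is a sketch under loud assumptions, not a model of any real institution: it tracks only the decaying envelope of the ringing rather than the oscillation itself, and the names `peak_disturbance`, `damping`, and `interval` are invented here for illustration.

```python
def peak_disturbance(interval: int, damping: float = 0.5, shocks: int = 50) -> float:
    """Peak residual disturbance when unit shocks arrive every `interval` steps.

    Assumption: each step the residual shrinks by the factor `damping`
    (the institution settling), and each shock adds 1.0 on top of
    whatever has not yet damped out.
    """
    residual = 0.0
    peak = 0.0
    for _ in range(shocks):
        residual += 1.0                  # forcing function N arrives
        peak = max(peak, residual)
        residual *= damping ** interval  # settle until forcing function N+1
    return peak


slow_arrivals = peak_disturbance(interval=10)  # shocks spaced past the settling time
fast_arrivals = peak_disturbance(interval=1)   # shocks arrive mid-ring
print(f"slow: {slow_arrivals:.3f}  fast: {fast_arrivals:.3f}")
```

With widely spaced shocks the residual returns almost to rest before the next arrival. With closely spaced shocks it never does: each new shock lands on top of the unsettled remainder of the last. In this toy the disturbance saturates rather than diverging; the point is only that the system never returns to equilibrium.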

Musk himself surfaces this in the interview when describing the difficulty of building power infrastructure. “The utility industry is a very slow industry,” he says. “They pretty much impedance match to the government, to the Public Utility Commissions. They impedance match literally and figuratively.” Try getting permits to cover Nevada in solar panels. Try getting an interconnect agreement at scale. The utilities will conduct a study. A year later, they will return with results. This is not dysfunction. It is an institution oscillating at its natural frequency, which is no longer the relevant frequency.

And there is a deeper structural problem. As the stack grows, the entities that depend on it multiply. Utilities depend on it for demand. Contractors depend on it for revenue. Agencies depend on it for launch capacity and compute. The more essential the stack becomes to the functioning of existing institutions, the less those institutions can afford to constrain it. This is not capture in the conspiratorial sense. It is the physics of dependency. A structure that has become load-bearing cannot be removed without designing something to bear the load in its absence. No one has designed that something.

The feedback loop is self-reinforcing: electrons generate compute, compute generates data, data generates forcing functions, forcing functions generate wealth, wealth purchases political access, political access secures more electrons. The continuity chain becomes a concentration chain. Not through conspiracy. Through physics. Each step follows from the previous one with the same inevitability as water flowing downhill.

Within five years, the primary competition between nation-states will be measured in allocated compute cycles, not GDP. GDP measures the output of the last economy. Compute allocation measures the input to the next one. The nations and entities that understand this are already acting on it. The ones that do not will discover, too late, that they were optimizing the wrong metric.

One looks, then, for the counterexample. A case where distributed or democratic electron allocation succeeded at scale. Where a governance mechanism emerged that was fast enough to operate at compute speed, open enough to resist capture, and robust enough to allocate a civilizational resource without concentrating it.

That absence might itself be the point.

X. The Race Condition

The consciousness machine has always had a governor on it. Not a government. A governor, in the mechanical sense: a centrifugal regulator that prevents an engine from running faster than its components can handle. Culture was that governor. Institutions were that governor. The pace of human deliberation, human verification, human absorption of forcing functions set the speed limit. The forcing functions arrived. They were debated, tested, resisted, adopted. The cycle was slow enough for the system to serialize its own inputs.

AI removed the governor.

The standard AI risk conversation focuses on alignment: will the AI do what we want, will it turn against us, will it optimize for the wrong objective. These are risks of a bad actor. The risk this framework surfaces is different. It is the risk of a system working exactly as designed.

The forcing functions now arrive faster than they can be verified. Faster than they can be governed. Faster than they can be absorbed into institutional or cultural structures. Not because anyone designed it that way. Because the Red Queen demands it. Once one actor raises the clock speed, every other actor must match it or be outcompeted. The pace ratchets. It never ratchets back. And the Red Queen is indifferent to the quality of the cycles. She does not select for good forcing functions. She selects for fast ones. The actor who pauses to verify, to deliberate, to check whether a forcing function actually serves continuity rather than a local maximum, that actor falls behind. Gets outcompeted. Dies.

The Red Queen selects against the very behavior that prevents the machine from locking into a catastrophic local maximum.

Any engineer who has debugged concurrent systems will recognize what this produces. It is a race condition. Not a metaphor. A structural description.

A race condition occurs when the outcome of a system depends on the sequence or timing of events, and the system has no mechanism to ensure they execute in the correct order. Two threads accessing the same resource. No lock, no gate that forces one operation to complete before the next begins. The system does not crash because of bad logic. It crashes because the speed itself creates an unresolvable conflict.
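
The definition above can be shown in a few lines. Real race conditions are intermittent, so this sketch makes the interleaving explicit instead of relying on actual threads: each "thread" is a generator that reads a shared value, pauses, and writes back. The names `increment`, `run`, and `schedule` are illustrative assumptions.

```python
from typing import Dict, Generator, List

Shared = Dict[str, int]


def increment(shared: Shared) -> Generator[None, None, None]:
    """One 'thread': read the shared value, pause, then write back value + 1."""
    seen = shared["value"]      # read
    yield                       # the scheduler may switch threads here
    shared["value"] = seen + 1  # write, possibly based on a stale read


def run(schedule: List[int]) -> int:
    """Step two increment threads in the order given by `schedule`."""
    shared: Shared = {"value": 0}
    threads = [increment(shared), increment(shared)]
    for thread_id in schedule:
        next(threads[thread_id], None)  # advance that thread by one step
    return shared["value"]


print(run([0, 0, 1, 1]))  # serialized: each thread finishes before the next starts -> 2
print(run([0, 1, 0, 1]))  # interleaved: both read 0, both write 1 -> 1
```

The logic of each thread is identical in both runs. Only the ordering differs, and one increment is silently lost. A lock is simply a mechanism that forbids the second schedule.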

The forcing functions are threads. The shared resource is human governance capacity: the ability to verify, deliberate, and course-correct. At human clock speed, the forcing functions arrived slowly enough to be serialized. Deliberation was the gate. Institutions were the lock. At compute clock speed, those mechanisms cannot serialize fast enough. The forcing functions collide. The output is indeterminate.

And race conditions have a specific, devastating property that distinguishes them from other failure modes: they are intermittent. They work fine in testing. They work fine at low clock speeds. They only manifest at scale, under load, at speed. One cannot reproduce them reliably. One cannot predict which thread will collide. One discovers them only when the system has already produced a wrong output and committed it to memory.

Musk is both the proof and the expression of this dynamic. He created the pace. He set the Red Queen running. His stack is the fastest machine in existence. “It obviously doesn’t scale,” he says in the interview. John Collison then asks: “Well, yes, but what doesn’t scale?” Musk replies, referring to himself: “Me.” He cannot conduct every engineering review, evaluate every hire, identify every limiting factor across an empire of 200,000 people. His batting average is high but not perfect, by his own admission. When asked whether humans will maintain control of AI that vastly exceeds human intelligence, he is direct: “I think it would be foolish to assume that there’s any way to maintain control over that.” He is not describing a rogue AI. He is describing the Red Queen. The race condition at civilizational scale.

The optimizer cannot solve his own fragility without slowing down. Slowing down contradicts the insight that made him right. And the pace he set does not wait for him. The Red Queen runs for everyone now.

The real AI risk is not a rogue superintelligence. It is not misalignment. It is a race condition. Forcing functions arriving faster than any governance mechanism can serialize them. The governor has been removed from the engine. The gate has been deleted from the code. The system will produce outputs. Whether those outputs serve continuity or lock in a catastrophic local maximum is, in the precise technical sense of the word, indeterminate. Not unknown. Indeterminate. The system, by its structure, cannot guarantee the answer.

Data is downstream of electrons. Everything else is downstream of data. The electrons will flow regardless. Some levers have been built. Some fire has been distributed. Some of the territory has been mapped. The forcing functions are arriving in silicon and carbon alike, at a cadence no existing institution was designed to absorb and few have yet retooled to match. What those electrons become, what the levers move, what the fire illuminates, what the maps reveal, is still, for now, opportunity. For individuals. For enterprises. For institutions willing to be rebuilt at the right clock speed.

The window is not closing because any one actor or coalition is closing it. It is closing because the Red Queen does not pause. The pace itself eliminates the conditions under which opportunity remains a coherent concept. What emerges in its place is the subject of Humanity Protocol, Part 2: The Gate at Clock Speed.

Part 2

The Gate at Clock Speed

Engineers solve race conditions every day. They never solve them by slowing the system down.

I. Terminal Velocity

Part 1 described a machine: data becomes forcing functions, forcing functions become work, work becomes continuity. AI raised the clock speed. The Red Queen emerged. And the argument appeared to close with a trap: the Red Queen selects against anyone who pauses to verify, to deliberate, to check whether a forcing function serves continuity or merely serves speed. Governance looked structurally impossible under the conditions the essay established.

The argument was incomplete.

Race conditions have a second phase. The first phase is indeterminate output: the system produces results, but no one can guarantee their correctness. The second phase is corruption: the indeterminate output propagates downstream, compounds, and eventually crashes the system. The crash is not optional. It is a mathematical property of ungoverned concurrency. A system without locks does not run forever at full speed. It runs at full speed until it doesn’t.

The Red Queen has two scythes. The first kills the slow. Everyone saw that one. The second kills the fast-and-wrong, on a longer timescale. This scythe is less visible because the interval between the corrupt output and the crash can be quarters, years, sometimes decades. But the selection pressure is just as lethal. An actor who entrenches a local maximum at clock speed does not merely plateau. The landscape moves underneath the peak. What was a local maximum becomes a cliff. The faster the landscape shifts, the faster the cliff arrives.

Pure speed without verification is not a sustainable strategy. It is a temporary strategy with a delayed cost, and the delay is shrinking as the clock speed rises. At human pace, the interval between corruption and crash was long enough to look like success. At compute pace, the interval compresses. The bill comes due faster. The Red Queen is not selecting for speed alone. She is selecting for sustainable speed. The organism that runs fastest without crashing is the one that survives. Not the one that runs fastest, full stop.

This changes the problem. The question is not whether governance can be built under the Red Queen’s pressure. The question is what kind of governance makes an actor faster, not slower. What kind of verification is a competitive advantage rather than a competitive cost.

The answer has been hiding in the evidence Part 1 already presented.

II. The Evidence Was Already There

Return to the profiles. Read them again, but this time look for the verification architecture, not the speed.

Jensen Huang spent a decade building CUDA before the market for general-purpose GPU computing existed. The popular reading is prescience: he saw demand before it materialized. The structural reading is different. What Jensen built was not merely a platform. It was a verification ecosystem. Documentation, libraries, developer tools, community forums, bug reports, performance benchmarks, compatibility testing across hardware generations. Every layer was a mechanism by which the ecosystem checked itself: developers found errors, reported them, contributed fixes, validated each other’s implementations. When deep learning arrived, the ecosystem was not just available. It was trusted. A researcher could write a training run on CUDA and have justified confidence that the output was correct, because a decade of distributed verification had debugged the substrate. The speed of the AI buildout is downstream of that trust. Jensen did not sacrifice speed to build verification. Verification was the product. The speed was the consequence.

George Church published everything. His genome, his medical records, his lab’s discoveries, all open access, freely usable. The popular reading is generosity. The structural reading: open publication is a verification architecture. When Church publishes a genomic technique, the entire scientific community becomes his QA department. Errors are found at the speed of reading, not the speed of replication. His river delta flows fast precisely because the water is clean. Fifty companies spun out of his lab not because Church is prolific, but because each spinout arrived pre-verified by a global community of scientists who had already stress-tested the underlying science. The open-access philosophy is not altruism. It is a clock-speed hack. Verification distributed across thousands of minds runs faster than verification concentrated in one lab.

Demis Hassabis verified AlphaFold’s predictions against every experimentally determined protein structure in existence before publishing the database. This was not caution. It was the act that made the database usable on arrival. Two hundred million protein structures, trusted by structural biologists worldwide from day one, because the verification preceded the publication. Had DeepMind published unverified predictions, the database would have been larger and faster to release. It would also have been worthless, because every downstream researcher would have needed to independently verify each structure before using it. The verification step did not slow the Oracle. It is what made the Oracle an oracle rather than a random number generator.

Let’s look at cases where the pattern is different.

Musk’s acquisition of Twitter substantially thinned the platform’s verification architecture: content moderation, trust and safety, editorial standards. Speed and cost reduction were the stated priorities. The result was not faster signal. It was noisier signal. The platform’s value as a prior-harvesting mechanism, the very function Part 1 identified as strategically essential, diminished in proportion to the thinning of its verification layer. The compass became harder to read precisely when it became faster to spin. His own assessment, “it obviously doesn’t scale — me,” is difficult to dispute. A single consciousness serializing decisions across hundreds of thousands of people is a system whose verification, however brilliant at the centre, thins at the periphery. It works until the unchecked output propagates far enough downstream to damage something that matters.

Jensen’s CUDA ecosystem faces its deepest vulnerability not where verification is strong but where it is absent: in the assumption that the ecosystem’s gravity is permanent. Every closed standard in history has eventually been displaced, and the displacement always originated in a domain where the incumbent’s verification architecture did not reach. Google’s TPUs are not competing with CUDA’s software ecosystem. They are building a verification ecosystem of their own, in a domain, custom silicon for specific workloads, where CUDA’s decade of accumulated trust does not transfer. The lever is powerful. The ground it stands on is verified only by the assumption that no one will move the ground.

The same pattern holds in the Bretton Woods analogy from Part 1. The system worked for decades not because it was fast but because it was verified: transparent exchange rates, published rules, auditable reserves, institutional mechanisms for dispute resolution. It collapsed when the verification layer, the dollar’s gold peg, decoupled from the reality it was supposed to verify. The crash was not caused by slowness. It was caused by the gap between what the system claimed was true and what was actually true. That gap is the definition of unverified signal propagating through a system. It is corruption. It always crashes.

The evidence converges on a single claim: verification is not the cost of speed. Verification is the mechanism that converts temporary speed into sustainable speed. The actor who verifies compounds. The actor who doesn’t, crashes. The Red Queen’s second scythe enforces this with the same inevitability as the first.

III. The Threshold

The objection is obvious. If every forcing function must be verified before it propagates, the system collapses back to human clock speed. Verification of everything is verification of nothing, because the queue itself becomes the bottleneck. This is the bureaucrat’s fallacy: the belief that governance means checking every line.

No competent engineering organization works this way. The insight that makes modern software development fast is not the absence of verification. It is the targeting of verification at the points where undetected error is catastrophic and irreversible.

A developer pushes code to a repository. Automated tests run in seconds: syntax, type safety, unit tests, integration tests. These are computational verification at clock speed. They catch the vast majority of errors without any human in the loop. The code passes. It enters a staging environment where a broader suite of tests runs against realistic data. Still automated. Still fast. The code passes again. It is deployed to a canary population, a small fraction of production traffic, where real-world behavior is monitored against predefined metrics. If the metrics hold, the deployment rolls forward. If they don’t, it rolls back automatically. The entire pipeline runs in minutes.

Human review enters the loop at exactly one point: the pull request, where another engineer examines the logic of the change. And even this gate is targeted. Trivial changes, formatting, documentation, minor fixes, pass with minimal review. Changes that touch critical systems, billing, authentication, data integrity, require deeper examination. The depth of verification is proportional to the irreversibility of the deployment.

This is the principle: the governance architecture does not serialize every decision. It serializes only the decisions that cross the irreversibility threshold.
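
The pipeline just described can be sketched as a pair of functions. This is a minimal illustration, not any real CI/CD system: `Change`, `CRITICAL_PATHS`, `review_depth`, and `deploy` are hypothetical names, and the boolean flags stand in for test suites and canary metrics.

```python
from dataclasses import dataclass
from typing import Set

# Hypothetical: systems where an undetected error is hard to undo.
CRITICAL_PATHS = {"billing", "authentication", "data_integrity"}


@dataclass
class Change:
    description: str
    touches: Set[str]
    tests_pass: bool = True        # stand-in for the automated test suites
    canary_healthy: bool = True    # stand-in for canary metrics holding


def review_depth(change: Change) -> str:
    """Depth of human review scales with the irreversibility of the change."""
    return "deep" if change.touches & CRITICAL_PATHS else "light"


def deploy(change: Change) -> str:
    """Run the automated stages in order and stop at the first failure."""
    if not change.tests_pass:
        return "rejected: automated tests failed"
    if not change.canary_healthy:
        return "rolled back: canary metrics degraded"
    return f"deployed after {review_depth(change)} review"


print(deploy(Change("fix typo in docs", {"docs"})))
print(deploy(Change("new invoice rounding", {"billing"})))
```

Every change passes through the same automated stages. Only the human gate varies, and it varies with what the change touches, not with who wrote it.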

Most forcing functions are reversible. A bad product decision can be unwound. An incorrect analysis can be corrected. A suboptimal allocation can be reallocated. These self-correct through ordinary feedback loops: markets, competition, peer review, customer complaints. The cost of the error is bounded because the commitment is bounded.

Catastrophic local maxima, the ones Part 1 warned about, come specifically from irreversible commitments made on unverified signal. A treaty signed on fabricated intelligence. A financial instrument structured on mispriced risk. A clinical protocol adopted on fraudulent data. A regulatory framework crystallized around a misunderstood technology. A constitutional provision enacted in a panic. Each of these is an irreversible commitment. Each can only produce a catastrophic local maximum if the signal it was based on was unverified at the moment of commitment. The crash is always traceable to a verification failure at an irreversibility threshold.

The governance architecture, then, has a specific and constrained job. It does not need to verify every forcing function. It needs to identify which commitments are irreversible and verify the signal underlying those commitments before they execute. Everything below the threshold flows freely, self-corrects through feedback, and runs at whatever clock speed the actors can sustain. Everything above the threshold passes through a gate.

And here is the structural point that resolves the Red Queen paradox: a system with targeted verification at irreversibility thresholds is faster than a system with no verification at all. Not morally superior. Faster. Because actors inside the system can take risks freely on everything below the threshold, knowing the architecture will catch catastrophic errors before they become irreversible. The developer who trusts the CI/CD (Continuous Integration / Continuous Deployment) pipeline ships more code than the developer who is terrified of breaking production because no safety net exists. The researcher who publishes in an open-access ecosystem iterates faster than the researcher hoarding results in a closed lab, because the distributed verification catches errors before they propagate into downstream work. The investor who can read a legible market prices risk more accurately and allocates capital more aggressively than the investor navigating an opaque one.

Trust in the verification layer is a speed multiplier. Its absence is a speed tax. The Red Queen does not punish the actor who builds the gate. She punishes the actor who runs without one, because that actor must be cautious about everything, having no mechanism to distinguish what requires caution from what doesn’t.

IV. The Primitives

What does the gate look like? Not as policy. As architecture.

Atomic settlement. A decision either closes fully, with all its consequences bound and all its dependencies resolved, or it does not close. There is no half-settlement. The pathology of the current system is precisely the half-settlement: a decision that moves money, reputation, or power before its evidentiary basis has been verified, and whose reversal, once the evidence arrives, is more expensive than the original commitment. Atomic settlement eliminates this. The transaction completes or it reverts. The cost of the revert is borne by the architecture, not by the downstream parties who relied on a settlement that turned out to be premature. Every database engineer understands this. It is the reason ACID (Atomicity, Consistency, Isolation, Durability) transactions exist. The principle translates: any commitment above the irreversibility threshold must be atomic.
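
For readers who have not met a database transaction, here is what all-or-nothing looks like in practice. The sketch uses SQLite from the Python standard library; the `accounts` table, the `settle` function, and the `evidence_verified` flag are assumptions introduced for illustration.

```python
import sqlite3
from typing import Dict


def make_ledger() -> sqlite3.Connection:
    """Create an in-memory ledger with two hypothetical accounts."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (name TEXT PRIMARY KEY, balance INTEGER)")
    conn.executemany("INSERT INTO accounts VALUES (?, ?)", [("a", 100), ("b", 0)])
    conn.commit()
    return conn


def balances(conn: sqlite3.Connection) -> Dict[str, int]:
    return dict(conn.execute("SELECT name, balance FROM accounts ORDER BY name"))


def settle(conn: sqlite3.Connection, payer: str, payee: str,
           amount: int, evidence_verified: bool) -> bool:
    """Move `amount` from payer to payee, fully or not at all."""
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            conn.execute("UPDATE accounts SET balance = balance - ? WHERE name = ?",
                         (amount, payer))
            if not evidence_verified:
                raise ValueError("evidentiary basis not verified")
            conn.execute("UPDATE accounts SET balance = balance + ? WHERE name = ?",
                         (amount, payee))
        return True
    except ValueError:
        return False


ledger = make_ledger()
settle(ledger, "a", "b", 40, evidence_verified=False)
print(balances(ledger))  # {'a': 100, 'b': 0}: the debit was rolled back
settle(ledger, "a", "b", 40, evidence_verified=True)
print(balances(ledger))  # {'a': 60, 'b': 40}
```

The failed settlement leaves no trace in the balances. The half-settled state, money debited but never credited, exists only inside the transaction and is never visible to anyone downstream.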

Serialization gates at irreversibility thresholds. The gate does not serialize all decisions. It serializes only those that cross the threshold: the ones whose reversal cost exceeds a defined bound. Below the threshold, decisions execute concurrently, in parallel, at whatever speed the actors can achieve. Above the threshold, the gate imposes ordering. This is a semaphore, not a bottleneck. The vast majority of forcing functions never reach it. The few that do pass through in bounded time, with a defined standard of evidence, and close atomically. The gate is narrow. The highway on either side of it is wide open.
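
A gate of this kind is short to write. The sketch below is one possible shape, assuming a single numeric `reversal_cost` per decision and using a plain lock (a binary semaphore) as the gate; `ThresholdGate` and its fields are hypothetical names.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from typing import List


class ThresholdGate:
    """Serialize only decisions whose reversal cost exceeds `threshold`."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold
        self._lock = threading.Lock()
        self.ordered_log: List[str] = []  # gated decisions, in commit order
        self._inside = 0
        self.max_inside = 0               # most decisions ever inside the gate at once

    def execute(self, name: str, reversal_cost: float) -> str:
        if reversal_cost <= self.threshold:
            return "concurrent"           # below threshold: no lock, no ordering
        with self._lock:                  # above threshold: one at a time
            self._inside += 1
            self.max_inside = max(self.max_inside, self._inside)
            self.ordered_log.append(name)
            self._inside -= 1
        return "serialized"


gate = ThresholdGate(threshold=100.0)
# Hypothetical workload: one decision in ten is expensive to reverse.
decisions = [(f"d{i}", 1000.0 if i % 10 == 0 else 1.0) for i in range(50)]
with ThreadPoolExecutor(max_workers=8) as pool:
    outcomes = list(pool.map(lambda d: gate.execute(*d), decisions))

print(outcomes.count("concurrent"), outcomes.count("serialized"), gate.max_inside)
```

Forty-five of the fifty decisions never touch the lock. The five that do pass through one at a time, and the log records the order in which they closed.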

Append-only audit trails. Every decision that crosses the threshold is recorded in an immutable, append-only log. The log does not slow the system. It makes the system’s state legible to any participant at any time, without requiring trust in any single authority’s account of what happened. When a dispute arises, the log is the ground truth. The cost of disputing a recorded decision drops dramatically, because the evidence is already structured, timestamped, and tamper-evident. The cost of fabricating a false record rises toward infinity. This asymmetry, cheap verification, expensive fabrication, is the structural property that makes the audit trail a speed technology rather than a compliance burden.
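
The asymmetry between cheap verification and expensive fabrication is usually achieved with a hash chain: each entry commits to the one before it. A minimal sketch follows, assuming SHA-256 and JSON-serializable records; `append`, `verify`, and `GENESIS` are illustrative names. A Python list is not itself immutable, so what this gives is tamper evidence, not tamper prevention.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # assumed placeholder hash for the first entry


def _digest(prev_hash: str, record: Dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


def append(log: List[Dict], record: Dict) -> None:
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash,
                "hash": _digest(prev_hash, record)})


def verify(log: List[Dict]) -> bool:
    """Recompute the chain; any edited entry breaks every hash after it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


log: List[Dict] = []
append(log, {"seq": 1, "decision": "approve"})
append(log, {"seq": 2, "decision": "reject"})
print(verify(log))                          # True
log[0]["record"]["decision"] = "reject"     # rewrite history
print(verify(log))                          # False
```

Verification is one pass over the log. Forging a consistent history requires recomputing every subsequent hash and replacing every copy of the log that other participants already hold.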

Computational verification. The verification layer runs at clock speed because it is computational, not deliberative. The forcing functions that arrive at compute pace are verified by systems that operate at compute pace. Pattern detection, anomaly flagging, provenance checking, consistency validation, statistical auditing: these are not committee processes. They are algorithms. They run in the same cycle as the forcing functions they verify. The human enters the loop only when the computational layer flags an anomaly that exceeds its classification confidence, the same way a developer enters the loop only when the CI/CD pipeline flags a test it cannot resolve. The default is automated. The exception is human. This is the inversion that makes governance compatible with clock speed.
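
The inversion, automated by default and human by exception, can be sketched with the simplest possible check. The example assumes a z-score against recent history as the anomaly test; the thresholds `pass_z` and `reject_z` and the function name `classify` are hypothetical choices, not a recommended detector.

```python
from statistics import mean, stdev
from typing import List


def classify(value: float, history: List[float],
             pass_z: float = 2.0, reject_z: float = 4.0) -> str:
    """Return 'pass', 'reject', or 'escalate' for a new observation.

    `history` needs at least two points so a standard deviation exists.
    """
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        z = 0.0 if value == mu else float("inf")
    else:
        z = abs(value - mu) / sigma
    if z <= pass_z:
        return "pass"       # consistent with history: handled automatically
    if z >= reject_z:
        return "reject"     # clearly anomalous: handled automatically
    return "escalate"       # ambiguous band: hand to a human reviewer


history = [10, 11, 9, 10, 12, 10, 9, 11]
for observation in (10.5, 13.5, 20.0):
    print(observation, classify(observation, history))
```

Two of the three outcomes never involve a person. The human sees only the middle band, where the algorithm’s own confidence runs out.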

Rollback. When a settlement is shown to have been based on unverified or fabricated signal, the system reverts it. Not as a political act. As an architectural property. The atomic settlement was designed to be revertible if its preconditions are later shown to have been false. The rollback is bounded in time: there is a finality window after which settlements become permanent, because infinite reversibility is itself a form of instability. But within the window, the correction is automatic, the cost is borne by the architecture, and the downstream parties who relied on the settlement are made whole. This is the mechanism that prevents noise from accumulating into structural damage. The correction runs at the same speed as the error.
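
The finality window is the part of this primitive most easily stated in code. A minimal sketch, assuming time is passed in explicitly as seconds and the window is one hour; `Settlement`, `FINALITY_WINDOW`, and `try_rollback` are illustrative names, and making downstream parties whole is out of scope here.

```python
from dataclasses import dataclass

FINALITY_WINDOW = 3600.0  # hypothetical: one hour, in seconds


@dataclass
class Settlement:
    amount: int
    settled_at: float      # time the settlement closed, in seconds
    reverted: bool = False


def try_rollback(s: Settlement, now: float, precondition_false: bool) -> bool:
    """Revert `s` if its precondition proved false and the window is still open."""
    if s.reverted or not precondition_false:
        return False
    if now - s.settled_at > FINALITY_WINDOW:
        return False       # past finality: the settlement is permanent
    s.reverted = True
    return True


early = Settlement(amount=100, settled_at=0.0)
late = Settlement(amount=100, settled_at=0.0)
print(try_rollback(early, now=1800.0, precondition_false=True))  # True
print(try_rollback(late, now=7200.0, precondition_false=True))   # False
```

The same evidence produces a correction inside the window and nothing outside it. Where to set the window is the real design question, and this sketch does not answer it.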

Five primitives. None of them require anyone to slow down. None of them require a committee, a conference, or a regulatory body that meets quarterly. Each operates at clock speed. Together, they constitute a gate that serializes only what must be serialized, verifies computationally, records immutably, and corrects automatically.

V. The Protocol

In 1974, Vint Cerf and Bob Kahn published a paper describing a protocol for packet-switched network intercommunication. The protocol did not slow the network down. It was the precondition for the network existing at all. Without TCP/IP, there was no internet. There were isolated networks that could not interoperate. The protocol was not governance applied to speed from the outside. It was the infrastructure layer that made speed coherent.

Bretton Woods was the opposite: external governance. A conference. A set of rules designed by a small number of actors and applied to an existing system from above. It worked for a generation. Then the system outgrew the rules, because the rules could not adapt at the speed the system required. External governance has a fundamental scaling limitation: the governance layer must update as fast as the system it governs, and when the governed system is the global economy, no external body can keep pace indefinitely. The dollar's fixed peg to gold was a static verification mechanism applied to a dynamic system. The gap widened until, in 1971, it broke.

The electron economy will break external governance faster than Bretton Woods did, because the clock speed is higher. The EU AI Act is Bretton Woods on a compressed timeline: a static framework applied to a system that evolves faster than the framework can amend itself. It is not a failure of European governance. It is a structural impossibility. No legislature, no matter how competent, can draft regulation at the speed a frontier AI lab iterates its models. The forcing function will always arrive before the rule that governs it.

The resolution is not faster regulation. It is intrinsic governance: rules that are properties of the infrastructure itself, that scale as the system scales, that verify at the speed the system operates.

TCP/IP does not require a regulatory body to approve each packet. The protocol is embedded in the infrastructure. Every router, every switch, every endpoint implements it. It verifies packet integrity computationally, at line speed, billions of times per second. When a packet arrives corrupt, the checksum catches it and the sender retransmits. When a route fails, the protocol reroutes. No committee convenes. No study is commissioned. The governance runs at the speed of the system because the governance is the system.
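The integrity check in question is small enough to read in full. Below is a sketch of the 16-bit ones' complement checksum described in RFC 1071, the algorithm used by the IP, TCP, and UDP headers. The function names are this essay's, not a library's. Note what it does and does not do: it detects corruption, and the correction comes from retransmitting the damaged segment.

```python
def internet_checksum(data: bytes) -> int:
    """16-bit ones' complement checksum in the style of RFC 1071."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        # Add each big-endian 16-bit word.
        total += (data[i] << 8) | data[i + 1]
    while total >> 16:
        # Fold any carry out of the top back into the low 16 bits.
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def is_intact(data: bytes, checksum: int) -> bool:
    """True if the data still matches the checksum computed by the sender."""
    return internet_checksum(data) == checksum
```

For the eight example bytes used in RFC 1071 (`00 01 f2 03 f4 f5 f6 f7`) this returns `0x220d`. Flip a single bit in transit and `is_intact` returns `False`. No committee convenes: the check is a few additions per packet.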

CUDA is a protocol in this sense. It does not govern GPU computing from the outside. It is the layer that makes GPU computing coherent. Developers do not ask Nvidia’s permission to run a training job. They write to the CUDA API, and the protocol handles memory management, thread scheduling, error detection, and hardware abstraction at clock speed. Jensen Huang did not build a regulatory framework for GPU computing. He built the governance layer into the infrastructure.

What the electron economy lacks is the equivalent layer for the forcing functions themselves. The electrons have a protocol. The computation has a protocol. The network has a protocol. The decisions that the computation produces, the forcing functions that propagate through institutions and markets and cultures, the commitments that become irreversible, have no protocol. They propagate raw. Unverified. Without atomic settlement, without audit trails, without computational checking, without rollback. The system above the silicon layer is running without TCP/IP. It is a packet-switched network in 1970: isolated actors, no interoperability, no error correction, no governance, and a scaling ceiling that will arrive exactly when the stakes become civilizational.

The Humanity Protocol is not a metaphor. It is an engineering specification for the governance layer that sits above computation and below institutional decision-making. It verifies forcing functions computationally at clock speed. It serializes only at irreversibility thresholds. It records atomically. It corrects automatically. It does not require anyone to slow down, convene, or ask permission. It makes the forcing functions that flow through it trustable, in the same way TCP/IP makes packets trustable and CUDA makes GPU computations trustable. Trust is not a moral property in this context. It is an engineering property. It means: the output has been checked, the check is auditable, and the correction is automatic.
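To show that these properties compose, here is a deliberately small sketch of such a gate. Everything in it is an assumption made for illustration: the `Gate` class, the numeric `irreversibility` score attached to each action, the 0.5 threshold, and the choice of a SHA-256 hash chain for the audit trail. Actions below the threshold pass untouched. Actions at or above it are serialized behind a lock, verified, and recorded in a log where each entry commits to the one before it.

```python
import hashlib
import json
import threading
from typing import Callable, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first log entry


def _entry_hash(action: dict, accepted: bool, prev: str) -> str:
    body = json.dumps({"action": action, "accepted": accepted, "prev": prev},
                      sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


class Gate:
    """Hypothetical gate that serializes only above an irreversibility threshold."""

    def __init__(self, verifier: Callable[[dict], bool], threshold: float) -> None:
        self.verifier = verifier
        self.threshold = threshold
        self._lock = threading.Lock()
        self.log: List[dict] = []

    def submit(self, action: dict) -> bool:
        if action["irreversibility"] < self.threshold:
            return True  # reversible: no serialization, no verification
        with self._lock:  # irreversible: one at a time, checked, recorded
            accepted = self.verifier(action)
            prev = self.log[-1]["hash"] if self.log else GENESIS
            self.log.append({"action": action, "accepted": accepted,
                             "prev": prev,
                             "hash": _entry_hash(action, accepted, prev)})
            return accepted

    def log_is_consistent(self) -> bool:
        """Recompute the chain; any edited entry breaks every later link."""
        prev = GENESIS
        for entry in self.log:
            expected = _entry_hash(entry["action"], entry["accepted"], prev)
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True
```

An in-memory list is not immutable, so the hash chain here only makes tampering detectable, not impossible. A real deployment would need replicated or otherwise protected storage, which this sketch does not attempt.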

VI. What Compounds

Part 1 ended with a window. The window is real. But it is not a window of time in the ordinary sense, a period before something bad happens. It is a window of architecture: a period during which the infrastructure layer is still being built and the design choices have not yet crystallized.

Every protocol is path-dependent. TCP/IP embedded specific assumptions about packet size, error tolerance, routing, and addressing that shaped the entire internet built on top of it. Some of those assumptions were brilliant. Some were limiting. All of them became permanent once the installed base grew past a certain threshold. The design choices made in the protocol layer of the electron economy will similarly become permanent. The concrete is wet now. It will not be wet long.

The actors who build the protocol capture something more durable than market share. They capture the terms of participation. TCP/IP’s designers did not become rich. They became the implicit governance of a global network. CUDA’s designers became the implicit governance of the AI buildout. The protocol layer is where structural power resides, not because it extracts value, but because everything built on top of it inherits its properties. A protocol that makes verification cheap and fabrication expensive produces an ecosystem where signal compounds and noise attenuates. A protocol that lacks these properties produces the opposite.

This is the resolution to the distinction that Part 1 left unresolved: the difference between fast cycles that entrench local maxima and fast cycles that explore the solution space. The difference is not intent. It is not culture in the diffuse sense. It is architecture. A system with verification at its irreversibility thresholds explores, because actors can afford to experiment freely below the threshold, confident that catastrophic errors will be caught before they become permanent. A system without verification locks in, because every actor must either be cautious about everything or reckless about everything. There is no middle ground when the gate does not exist. Verification infrastructure is exploration infrastructure. They are the same thing.

Clean signal compounds. This is not a moral claim. It is a mathematical one. An actor whose decisions are based on verified signal makes fewer catastrophic errors, recovers faster from non-catastrophic ones, and accumulates capability on a steeper curve than an actor navigating noise. Over a single cycle, the noise-tolerant actor may move faster. Over a hundred cycles, the signal-disciplined actor is untouchable. The Red Queen’s second scythe enforces this. Every crash resets the noise-tolerant actor’s accumulated position. The signal-disciplined actor never crashes, because the gate catches the corruption before it propagates.
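The arithmetic behind that claim can be run directly. The model below is a toy, and every number in it is an assumption chosen for illustration: the noise-tolerant actor grows 10% per cycle but crashes every 20 cycles and resets to its starting position, while the signal-disciplined actor grows 5% per cycle and never crashes. It illustrates the shape of the argument. It does not prove it, and different parameters would move the crossover point.

```python
from typing import Optional


def capability(cycles: int, growth: float,
               crash_every: Optional[int] = None,
               reset_to: float = 1.0) -> float:
    """Capability after `cycles` of compounding, with optional periodic crashes."""
    level = 1.0
    for cycle in range(1, cycles + 1):
        level *= 1.0 + growth
        if crash_every and cycle % crash_every == 0:
            level = reset_to  # a crash wipes the accumulated position
    return level


def disciplined(cycles: int) -> float:
    # Assumed: slower per-cycle growth, no crashes.
    return capability(cycles, growth=0.05)


def noise_tolerant(cycles: int) -> float:
    # Assumed: faster per-cycle growth, full reset every 20 cycles.
    return capability(cycles, growth=0.10, crash_every=20)
```

After one cycle `noise_tolerant(1)` exceeds `disciplined(1)`. After a hundred, the disciplined actor's position is larger than anything the noise-tolerant actor reached at any point along the way, because the latter's curve never compounds for more than twenty cycles at a time.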

And here is where the framework dissolves a distinction that the popular discourse treats as fundamental. The difference between progress and risk is not a property of the forcing function. It is a property of the verification architecture through which the forcing function passes. A drug target identified by AI is progress if verified before clinical deployment. It is risk if deployed unverified. A financial instrument is an engine of efficient allocation if its risk model is audited. It is a weapon of mass destruction if the risk model is opaque. A deepfake is a creative tool if provenance is tagged. It is a societal solvent if provenance is absent. The forcing function is neutral. The verification architecture determines its valence. Fast verification converts risk into progress. Slow verification converts progress into risk. No verification makes the distinction meaningless.

The protocol does not pick winners. It does not decide which forcing functions to produce or which futures to pursue. It establishes the minimum conditions under which forcing functions can be trusted, commitments can be made, errors can be corrected, and the machine can cycle without crashing. It is not ideology. It is infrastructure. And like all infrastructure, its absence is more consequential than its presence. One does not notice TCP/IP when it works. One notices its absence immediately, in every failed connection, every corrupt packet, every network that cannot interoperate.

The consciousness machine that Part 1 described has been running without a protocol layer for the entirety of human history. The forcing functions were slow enough that culture, institutions, and deliberation could serve as an informal protocol: checking, correcting, serializing, recording. That informal protocol was never fast. It was fast enough. It is no longer fast enough. The forcing functions now arrive at a speed that requires the protocol to be formal, computational, embedded in the infrastructure, and operating at clock speed.

The governor has not been removed from the engine. The engine has outgrown the governor. What is required is not the old governor, reinstalled. It is a new governor, built into the engine block, running at engine speed, governing not by restricting the RPM but by ensuring that every revolution is clean.

Part 1 asked what the electrons become. The answer is: whatever the protocol permits. Build the protocol to verify, and the electrons become progress. Build no protocol, and the electrons become noise. Let the protocol be designed by whoever moves fastest in its absence, and the electrons become whatever serves the designer’s local maximum.

The protocol is not a thing one waits for. It is a thing one builds, or inherits from whoever built it first.

Every actor who ships a verification standard, prices a compute claim, opens an audit trail, or closes a dispute on a clock is pouring concrete. The question is not whether the infrastructure gets built. It is whether the people who care about what the machine produces pick up a trowel, or leave the pour to whoever moves fastest and asks no questions.

The concrete is still wet. Not for long.